AI and the Future of Work: A Year in Review with Daniel Rock

Today’s chat dives deep into the evolving landscape of work in the age of AI. We’re checking in with Daniel Rock, a Wharton professor and Expert here at Feedforward, to reflect on how our understanding of AI’s impact has shifted over the past year. It’s all about grappling with the reality of what AI means for jobs and organizations—are we headed toward a future where work is fundamentally transformed, or are there still plenty of opportunities for humans? Daniel’s got some fresh insights, examining whether the nature of jobs will change or if the fear of widespread job loss is overstated. We’ll also unpack the implications of these changes, not just for individuals, but for society at large. So, let’s get into it!

Takeaways:

- In this episode, we reflect on how AI is reshaping jobs and organizations, emphasizing that while job structures may shift, work itself will persist in various forms.

- The conversation highlights Daniel Rock's evolving insights on AI's impact, revealing a complex landscape where automation alters the nature of tasks rather than eliminating jobs entirely.

- We discuss the growing reliance on AI agents within workflows, marking a transition from single-task automation to the deployment of multiple agents in various roles.

- The episode underscores the importance of adaptability in the workforce as AI technologies evolve, suggesting that continuous learning will be crucial for future job security.

- A key takeaway is the idea that while AI can enhance productivity, it also raises questions about the future of work and the societal implications of reduced job availability.

- We explore the tension between optimism and concern regarding AI, noting that successful integration of these technologies hinges on thoughtful organizational strategies and employee engagement.

Transcript

Hi, this is Adam Davidson, one of the co-founders of FeedForward. Today's episode is one I've been looking forward to. It's a check-in conversation, a chance to ask where were we a year ago and where are we now?

My guest is Daniel Rock, a professor at Wharton and one of the most important thinkers we turn to at FeedForward to understand what AI is actually doing to work, to jobs, to organizations.

As I'm sure most of you know by now, he's one of our experts and someone I've been watching update his views in real time. He's moved in both optimistic and more unsettling directions. I think you'll find his framework genuinely useful. We have a first on this episode.

The very first second timer. So you are the exclusive member of the FeedForward podcast Second Timers Club. Welcome, Daniel Rock.

Daniel Rock:Great to be back.

Adam Davidson:Great to be back. Did you receive the jacket and all the paraphernalia befitting your status?

Daniel Rock:Nice. Is it like a Masters jacket if you win the tournament?

Adam Davidson:Exactly, yeah. You get to decide it since you're the first. So you are, you're a professor at Wharton. I mean, I think most FeedForward members know who you are.

We turn to you to sort of understand the impact of AI on work, on jobs, on organizations. And this, obviously this is like the. One of the most important questions because it doesn't just touch on.

Probably many people think in terms of just, well, first off, my own job and then secondly, how is my organization going to work? How do I hire for this? Do I hire for this?

But then, you know, it then very quickly spins up into, wait, what is society if it turns out people don't get to have jobs?

Daniel Rock:So fully automated luxury communism. Is that what we're.

Adam Davidson:Yeah. Or, you know, Matrix-like, batteries for the machines.

Daniel Rock:The safe state is good.

Adam Davidson:Yeah. I would summarize your prior view. It's much more subtle than this, but that broadly speaking, don't think in terms of there will be.

Nobody will have jobs, but maybe think in terms of the nature of jobs will change, but that the, the idea that nobody will have jobs is overstated. So is that, is that the right way to describe your view?

Daniel Rock:I think still, yes. Jobs will continue to exist. I've become concerned, well, just disciplining myself with my own data and processes. Right.

Like we go look at an organization and break it down role by role in terms of the set of tasks they've got and then try to think about, like, where can AI help and where might it not. I was looking at our examples of things where AI might not help, and with agents, I think they're starting to get unlocked.

So the set of things where I think AI might be useful in terms of knowledge work has certainly expanded. And I forget who was saying this around FeedForward. Maybe it was you, Adam.

You know, if you're using a computer to do a task, then AI might have something to say about it or something to help you do there. So.

Adam Davidson:And that's something. If I said it, I stole it from Ethan Mollick. But let me take. I'll take credit for it. He's not here.

There's a bunch of things you just said that I want to unpack a little bit. So the first thing you talked about was tasks.

And I think that's an important ground level thing to talk about, which is when you ask an economist about the word job, that's like asking someone about a bag of groceries or something. It's not. The job is not the thing itself. It is.

Daniel Rock:I'm going to have to steal that. That's so good.

Adam Davidson:Yeah, right. We don't say like how much food can you afford? Oh, I can afford three bags of groceries. That's not a meaningful.

And so a job is really a container of a bunch of tasks. That's a way to think about it. It's a little more complicated because it's a long term contract typically to a subscription to groceries.

It's a subscription to groceries. Right. So that allows us to get some intuitions about how work will change.

The mental model I always have is the TV show Mad Men, which feels like it's in the not too wildly distant past. But when you watch that show about the ad agency in the 60s, there's two floors of people at Sterling Cooper, the ad agency.

And I'd say the vast majority of tasks that you see people doing are now done by computers.

So there's secretaries typing letters, there's secretaries answering phone calls, there's draftspeople hand drafting illustrations, there's accountants using slide rules to calculate numbers. And there's like a very small number of people sitting in grand offices who get to have fresh ideas. So that to me is an actual. That's a happy story.

A story where technology comes along, it automates a bunch of processes.

Daniel Rock:It was a long-run happy story. I think if you were a secretary as this stuff was happening, depending on when you started, that job might not be such a good story for you.

Adam Davidson:Right? That's true. It's a long. It's not clear. And in fact, that's a theme of the show is who is this good for? And who is it not good for? But.

But if we think of that, like, what do I do all day? Sometimes I type reports, sometimes I go to meetings, sometimes I have big strategic thoughts. Or maybe it's a more narrow task. Right.

Like we need 30 people who can assemble this part by grabbing things from this tray and things from this tray, whatever it is. So I think we get that. So you can look at the tasks like, okay, I create PowerPoints that are supposed to communicate things.

And AI either can or, you know, does or doesn't help me with that. Okay. So that's one thing. I have to have fresh strategic insights or I have to. Whatever. Okay, does AI help me with that? Right.

That's sort of a very rough description of how this works. So walk me through. Maybe walk me through where you were a year ago, and then let's get to where you are now.

Daniel Rock:You know, I. I expected to be surprised somewhat by things that would show up. So you kind of have to bake that in, as we've discussed many times. And then I think as you've discussed with other guests of the show.

So Cursor, about a year ago, was my main way of coding, and you had agent mode. Dan Shapiro was getting us to, like, do the YOLO mode with agent or Cursor Composer, which means you just.

Adam Davidson:Right. I remember that being like a terrifying thing. So Cursor, it's an IDE.

It doesn't really matter what that is, but it's basically a way to write code and to. But we were just a year ago, we were just in the early stages of like, you know, you don't even have to look at the code.

You can just describe the thing you want built and then let this thing called an agent.

Daniel Rock:Yeah.

Adam Davidson:And I remember, and that was like a fresh idea.

Daniel Rock:Steve Yegge and Gene Kim, I was talking with them and Steve had this, like, you know, you go from copilots to, you know, agents to, like, teams of agents to fleets of agents. And he was like, this is all coming soon. And I thought, wow, that's cool, but nobody does that.

And now, like, the full fleets of agents, the Gas Town or, you know, equivalent, that's kind of widespread now in certain communities. And I think it's still got quite a ways to diffuse.

Adam Davidson:Yeah. And I'm going to keep being annoying and just explain some things. So Steve Yegge, legendary computer programmer.

Gene Kim, kind of a legendary creator of frameworks for software development. They wrote the book Vibe Coding, which, to be honest, is just a love letter to FeedForward. It quotes you a lot. It quotes Matt Beane a lot.

And Steve has created this kind of crazy contraption called Gas Town, which is basically, you just tell it, I want something like this, and it runs off and does it. But behind the scenes, there's. And he really loves storytelling. So there's a mayor who allocates things to different city officials. And it's kind of.

I say this with love. It's kind of insane.

Daniel Rock:He would admit that, too.

Adam Davidson:He would admit that, too. And his first advice was, do not use this tool.

Daniel Rock:Which, full disclosure, I don't, because I listened to Steve there. I am not quite his fully leveled up programmer yet.

Adam Davidson:But for what it's worth, I have used it. And honestly, my complaint is it's too good. Like, it. It so thoroughly does the thing you want that I feel, like, irrelevant.

And I find that I just, in part because I'm still learning and I'm enjoying the learning process and in part because it's kind of weird to just say, I want this thing, and then it's sort of like, okay, you're not needed anymore. We're going to go do that thing anyway.

Okay, so just to be clear, a year ago, the cutting-edge frontier was using one agent or one automatic thing, and people were telling you, oh, before long there's going to be fleets of them. And now, like, every Claude Code session, for example, can trigger dozens of agents without you even knowing it's doing it.

Like, there's something people say in economic history very often is like, your great grandfather could tell you every motor he owned, and the number might be one or maybe two, like his car and his tractor. Or maybe we don't. Like, you have motors like I'm looking at. I have a camera that has a motor.

I have, you know, I have, like, I probably have dozens of motors. When we were younger, we could tell you how many CPUs, how many computer chips we owned.

Now, I couldn't even tell you how many CPUs are within, like, 3 feet at any given moment, right? Watches and phones and cameras and microwaves and everything has a CPU.

So it, I think of that analogy, like a year ago, if you were using agents at all, you could tell somebody exactly how many agents you had.

Daniel Rock:Got no idea now.

Adam Davidson:And now you have no idea. And. And it's barely getting started, right? Like, we're, we're still in early stages, so that's a big learning for you. That's a big.

Daniel Rock:Yeah and you could kind of see it like it's not that I didn't believe Steve, I was just like I didn't, I couldn't see the form factor yet. And to his credit, you know he built the form factor at least the first one. Um, but yeah, that took like six to eight months to show up.

Um, what I'm seeing now a little bit. You know, I found the Norges Bank Investment Management FeedForward session to maybe be the most impactful in my thinking about the organizational technologies that need to show up.

Because if you think about this, where everybody can build whatever software they want, that's a huge organizational problem where you have people throughout the company building things that are not maintained, they're not version controlled, they're not secure. And they solved that by pushing engineers out into the rest of the org.

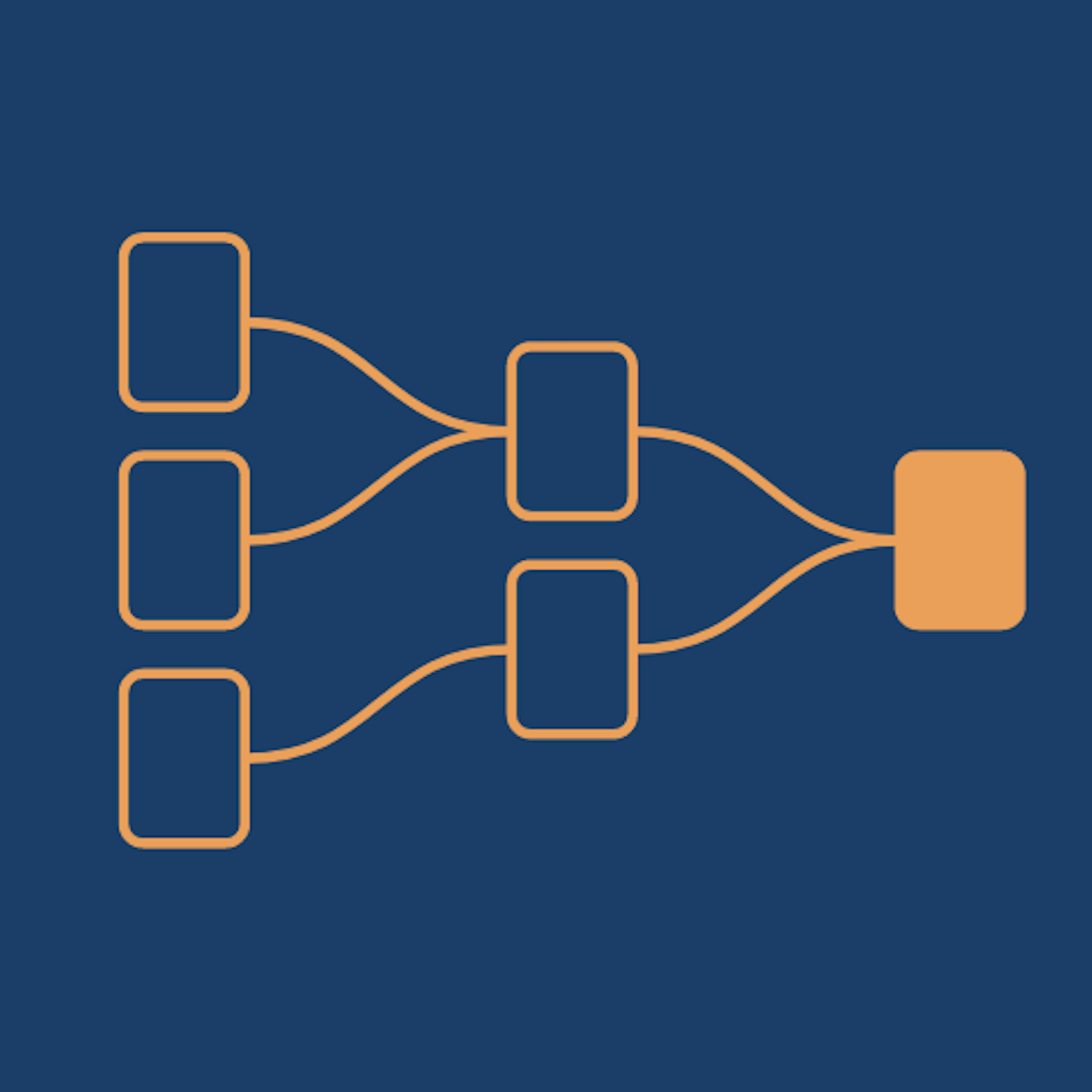

So if you think about the view of an organization top down, where you used to have a hub and spoke with technology in the middle, now it's almost literally like the steam-to-electric power transition.

You're having modularization of the technology org, and people who do that well get enormous productivity gains even without changing too much else. I guess you still gotta get adoption. And to go with that, every company I look at has a power law inside it.

It doesn't matter which tool, which company, what the application. Like, the top 10% of users are roughly half the usage overall. They're people who just are figuring out how to work with this stuff. And, you know, that can't be a long-run equilibrium.
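Editor's sketch: the "top 10% of users are roughly half the usage" observation is easy to check against a company's own usage logs. The snippet below is a minimal illustration, not anything described in the conversation; the function name `top_share` and the usage counts are made up for the example.

```python
def top_share(usage, top_fraction=0.10):
    """Share of total usage accounted for by the heaviest `top_fraction` of users."""
    if not usage:
        raise ValueError("usage must be non-empty")
    ranked = sorted(usage, reverse=True)
    # Always count at least one user, even for very small teams.
    k = max(1, round(len(ranked) * top_fraction))
    total = sum(ranked)
    return sum(ranked[:k]) / total if total else 0.0


# Hypothetical monthly prompt counts: 2 heavy users and 18 light ones.
usage = [90, 90] + [10] * 18
print(top_share(usage))  # 0.5 for this made-up data
```

A flat distribution, where everyone uses the tool equally, would return 0.1 for the top decile, so the gap between that and the measured share is one rough gauge of how concentrated adoption is.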

If you've got 10% of your company really ripping with this why wouldn't it propagate? So that's. So that's one set of things. I've updated another in the pessimistic direction. People just hate AI like all over the country and that.

Adam Davidson:Yeah I've seen public opinion polls, it's well below 50% approval. It's like not getting into politics but it's like and, and that is confusing, right? It is.

Daniel Rock:I think it's like it's a dangerous trend for our well being for people to be kind of captured but not.

Adam Davidson:AI but people's hate the deliberate politicization.

Daniel Rock:I can't say that word of AI kind of worries me a little bit but you know it's because they're good.

Adam Davidson:And just to call that out that I mean there are left wing people who love AI and right Wing people or Republicans who hate AI.

But broadly speaking, we are seeing, like, Bernie Sanders clearly positioning himself as, like, being the guy who's really worried about AI being a major part of who he is in the world. Obviously the Trump administration being very aggressively pro AI and seeing it as a huge growth.

Daniel Rock:But then you have the Anthropic stuff too, and, you know, it's like.

Adam Davidson:Right, right. Like using state power to. Well, depending on your point of view.

Daniel Rock:It's just that AI is in a political conversation much more often left or right. I think that's kind of dangerous as opposed to, like, letting it be something that people learn to work with or that companies learn to figure out.

Adam Davidson:Yes, that makes a lot of sense to me.

I mean, it does remind me of trade, where I think academic understanding of trade and then the economic realities of trade bumped up against the political process in ways that have not always been ideal.

Daniel Rock:One might have hoped that wouldn't have happened, and I had hoped this wouldn't have happened with AI, but I suppose it is.

Adam Davidson:Yeah. And probably inevitable and for similar reasons to trade. Right.

If you have a technology that will play a key role in figuring out not only what, like what people win or fail, what industries win or fail, what regions win or fail, like, you know, of course it's going to get political. Like how? Yeah, yeah, that's.

Daniel Rock:It just happened a little sooner than I expected. Yeah.

Adam Davidson:The other thing is, like, you know, this is sort of gets to a lot of the work I've done in my life as. As an explanatory journalist, is trying to study something and understand it in nuanced ways. And the political system do not have the same processes.

So I feel like your work, like the vast majority of research into AI by thoughtful academics, there's a lot of subtlety there. There's a lot of gray. And politics really likes winners, losers, enemies, victims. And that just creates a layer of obfuscation.

I forget the exact numbers, but I remember when you gave a presentation to the FeedForward members a year ago, I was surprised at how low the impact was. So, as I understand it, you were looking at these data sets that the US Government compiles on.

What are the skills embedded in different types of jobs?

Daniel Rock:The tasks. Yeah.

Adam Davidson:Anyone can search this. Yeah, the tasks. What are the tasks? Rather, skills are different from tasks. It's the OES database, Bureau of Labor Statistics.

You can just Google BLS OES and type in what you do. I do it so often, see how good it is. Like, some jobs were, like, in the 30%, some were in the, like, 5 or 8%. Walk me through how you.

Daniel Rock:Or even, this is what LLMs at that point in time could help you with, as compared to if you built, like, all the other systems, you layered on other software, and we had some advancements in what was possible.

And actually, those numbers are holding up pretty well, so there's a few different layers to it. The right-out-of-the-gate stuff is starting to happen. But that was a sort of lowish percentage.

I think it was like 14% of tasks in the economy. But if you were to like snap your fingers, get everything you need is well over half. It was like, you know, 54% or something of tasks.

And we're starting to get there. Right. Like some of those things are possible now. Which makes me worry about different things. Like, I don't know.

There's been two papers that are pretty recent that have sort of changed my thinking. Not in like a major way where, like, we blow up all the old frameworks or whatever, but two that I hold in very high regard.

One is this paper by Luis Garicano, Jin Li and Yanhui Wu. It's called Weak Bundle, Strong Bundle: How AI Redraws Job Boundaries.

And this one is like, why do tasks exist together in a bundle for a job in the first place? Can you split some tasks out? What happens when AI is exposed? That's a really cool question. There's the old joke about Silicon Valley.

You either bundle or you unbundle, right?

Adam Davidson:The only way to make money is to bundle or unbundle.

Daniel Rock:And in times where technology is a little bit more stable, you get a lot more bundling for a bunch of reasons. But now with the. You can think of a job as like what economists would call an equilibrium object.

It's determined by supply and demand and coordination costs between tasks. Those coordination costs are changing, so we're going to get new bundles or changing bundles.

And this paper is the first I've seen that really, like, provides a guide to how that might happen. So that's great. And then there's this other paper on, like, O-rings within jobs. And if you.

For those who don't know an O-ring production function or O-ring model, production is like, you get as output the minimum of your inputs. So this is like the Challenger disaster. The O-ring caused the failure of, you know, the space shuttle. It blew up.

Adam Davidson:So you're only as strong as your Weakest link kind of idea.

Daniel Rock:So if we think about the set of tasks, and this one is. So it's a Gans and Goldfarb paper. It's really nice. And it explains, like, you know, in our work we did linear kind of combinations of tasks.

Like every task is equally important. It doesn't really matter, you know, exposure or potential for AI to enable what you're doing that sort of enters linearly into a bundle.

They're saying, no, some tasks are kind of like much more important than others and bottlenecking the rest of the job.

So if you combine those two things, we start to think like, okay, we're going to have to do an appraisal of what's really important in work and how to change it. And that's a conversation that hadn't really started in earnest a year or two ago.

People were aware of this in the econ community and the research community, but jumping the gun a little bit to say, like, oh, that's, that's happening. I don't think that was really happening. And now people are at least talking about it. So I bet you that's the next two to three years.
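Editor's sketch: the contrast between a linear combination of tasks and a bottlenecked one can be made concrete with a toy calculation. This is an illustration under stated assumptions, not the model from either paper mentioned above. The per-task scores are invented, the function names are hypothetical, and two bottleneck variants are shown: the "minimum of your inputs" version described in the conversation, and the multiplicative form usually given in textbook O-ring models.

```python
from math import prod


def linear_score(task_scores, weights=None):
    """Weighted average: every task contributes in proportion to its weight."""
    if weights is None:
        weights = [1.0] * len(task_scores)
    return sum(w * s for w, s in zip(weights, task_scores)) / sum(weights)


def weakest_link_score(task_scores):
    """The job is only as good as its worst-performed task."""
    return min(task_scores)


def o_ring_score(task_scores):
    """Multiplicative form: one weak task drags down the whole product."""
    return prod(task_scores)


# Hypothetical job: AI handles three tasks well (0.9) and one poorly (0.2).
job = [0.9, 0.9, 0.9, 0.2]
print(linear_score(job), weakest_link_score(job), o_ring_score(job))
```

For this made-up job the linear view gives roughly 0.7, while the weakest-link view gives 0.2 and the multiplicative view about 0.15, which is the sense in which one task can bottleneck the rest.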

Adam Davidson:And let me see if I understand. Like, I think anyone you meet who has any kind of job, but I.

It's easiest in my mind to think of in terms of white collar jobs or knowledge work jobs. Like you get to the office at 9am or whatever, and then let's say you had a timer for every time you were truly adding value to the company.

I would guess just intuitively for a lot of people, you're lucky if it's several minutes in a day or maybe.

Daniel Rock:An hour, you just don't know which. Several days.

Adam Davidson:Right. That's the issue. Yeah, yeah, but like, this is something that came up. I write about this in my book, The Passion Economy.

But in hourly billing, that lawyers or accountants or others, you might have an insight in five or 10 minutes that's transformative for a client. And then you might do a whole bunch of commodity work that any lawyer could do.

But if you're billing by the hour, it's like in the marketplace, every hour has the exact same value, even though in 10 minutes, because you have a unique approach to thinking about taxation or corporate structure or whatever, you maybe saved that client $100 million. And then you had to write a bunch of contracts, or you had to get a bunch of new law school grads to write contracts at inflated prices. I also think of Kent Beck, another legendary programmer, who tweeted about AI: 90% of what I do is now a commodity.

But the 10% that remains is now worth way more than 10 times what it was.

Daniel Rock:That's an O-ring model. Exactly.

Adam Davidson:I think he was. That's an O-ring model. And part of this is just conceptual. Part of it is measurement too. Right. Like there was not.

We didn't have a timer of value add. We didn't have. For most jobs, we didn't have a good way.

Interestingly, I remember one of our members saying they do manufacturing and knowledge work and saying, for our manufacturing floor, it's much easier to count. Like, we can see how many people are on the floor, how much throughput is happening.

We can really measure even very subtle differences in productivity. But, like, what does it mean for a marketing person to be 12% more productive? Like, it's hard to know.

Daniel Rock:This has been a big thing for knowledge workers for decades, where there's been no real measurability and in some places not as much accountability for that. Like, you could tell very quickly if a factory worker, you know, a line worker, is not producing anything.

Harder to tell with a marketing analyst. Right. So I think that's going to be a really big reckoning. I actually expect that to be kind of painful.

You know, I, I experienced this actually in my first job. At first I was part of a bigger system. It was like the system performance is measured, but I wasn't individually. Like, it's trading stuff.

So they're like, we don't actually care about your trades' profit and loss because, you know, you're reducing risk, but you're also paying for it. So sort of like, can you buy it cheaply?

And then one day they were like, okay, actually we're gonna, we're gonna calculate the profit and loss of your trades that you're manually doing specifically.

And I thought, well, you know, if I'm paying for risk and I have this discussion with my boss, like, I don't want to get tagged, you know, and, and scolded for, for having negative profit or for having losses on my trades. If I'm making the system less risky, you guys got to tell me, like, how risky do you want this system to be?

And I'll try to hit that target, but you have to change your behavior as a worker when that kind of thing happens.

Adam Davidson: I think of in like:I think it was Brake liners and fuel injectors, I think. And then I went to a factory in China that was doing the exact same thing.

Like that was a time there was direct competition and the Chinese factory had so many more people. Like, it was just blazingly obvious. You, you walk into an American factory these days and you almost can't find people.

It's all this computer controlled machinery. And then like there's like one person in a white lab coat who's overseeing like 12 machines.

Whereas you walk back then, you walk into a Chinese factory and there it's like, like carnival. It's like just hundreds of people everywhere.

And I remember someone explaining, like at that time it has changed, but people are so cheap that it's better to hire 10 people and assume one or two of them will figure out how to be productive than to actually make sure it would cost more money to hire fewer people and make sure they're all being productive. And that's actually a measurement issue.

one of the, you know, from a

Daniel Rock:Yeah. You have this idea that investments in perfect knowledge, right, of how your organization works are actually quite expensive.

There's a lot of executives who have a ton of trouble getting high-quality signal from what's actually going on operationally for a variety of reasons. Um, yeah, that's some expensive things for referring.

Adam Davidson:So a year ago, we're largely in a world of chat.

We're largely in a world of like everything I get out of AI, I am asking AI to give me with like small whiffs of sometimes it can do its own thing for like 20 seconds, but not really to. Now we're in a world where dozens and hundreds of agents are doing all sorts of stuff. I don't even know how many agents.

So that has made you think, oh, maybe AI can do more tasks than I realize.

Daniel Rock:Yeah.

Adam Davidson:And then on top of it, we're getting. It sounds like a rapid richness in how we think about what a job is, which is an interesting outcome, but that makes sense.

Daniel Rock:Yeah. The organizational tinkering is starting to happen.

And I think if there's a thing that I get an equivalent feeling about technologically now to what I had a year ago, it's sort of the OpenClaw, you know, text-the-agent or have-it-in-your-Slack thing. It's still early, just like with fleets of agents. I'm like, there's some people who are doing this, but I'm not quite ready to adopt it myself yet because of the security risks and the like.

I tinker with it, but I haven't quite figured out the killer use case for myself. And I think probably that'll get figured out within a year.

Adam Davidson:I will tell you, I have figured it out.

It's totally obvious and you should just do it. You don't have to do it with OpenClaw, but basically, if you have a smarter system that knows more about you, you're creating autonomous agents to just improve yourself. Think about how Daniel spends his time. What are better ways?

Read every academic paper knowing the framework of what Daniel cares about and tell me every day, or every Thursday give me a report of what I missed. And then on top of it, you're able to prod that system with, hey, I was just thinking about, like, the history of task measurement. Find a bunch of stuff out about it. Like, you could be doing all of that. You don't need OpenClaw.
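Editor's sketch: the "every Thursday give me a report of what I missed" idea boils down to scoring new papers against a description of what someone cares about and returning the best matches. The snippet below is a deliberately simple stand-in that uses keyword matching where a real agent would presumably use a language model. The `Paper` class, the function names, the paper titles, and the interest list are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Paper:
    title: str
    abstract: str


def relevance(paper, interests):
    """Count how many interest phrases appear in the title or abstract."""
    text = f"{paper.title} {paper.abstract}".lower()
    return sum(1 for phrase in interests if phrase.lower() in text)


def weekly_digest(papers, interests, limit=3):
    """Return titles of the most relevant papers, best match first."""
    scored = [(relevance(p, interests), p) for p in papers]
    matches = [(score, p) for score, p in scored if score > 0]
    # sort() is stable, so ties keep their original order.
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [p.title for _, p in matches[:limit]]


# Placeholder papers, not real references.
papers = [
    Paper("Paper A", "Task bundles and automation in knowledge work"),
    Paper("Paper B", "Soil chemistry in alpine meadows"),
    Paper("Paper C", "Measuring tasks, automation, and productivity in firms"),
]
interests = ["task", "automation", "productivity"]
print(weekly_digest(papers, interests))
```

The follow-up prompt described above ("find a bunch of stuff out about the history of task measurement") would amount to calling the same function with a different interest list.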

Daniel Rock:Yeah, I was going to say that these are things that I would write agents to do. Like, I would have Claude Code or Codex do this. But I think there is something special about that OpenClaw or related form factor. Like Dan Shipper and the Every team, we talked, actually, you're right.

We had a feed forward session with those folks that, that thing seems special to me and I still don't understand exactly how or why, but I think it could create some changes.

Adam Davidson:So I described a Daniel Rock-centric one. But if you then had it also in your conversations with others.

So whether that's corporate Slack or you have a bunch of academic friends who you might write papers with and you have a group chat on Telegram with them or whatever. To me that's the next magic thing, that it can facilitate communication with others in all sorts of interesting ways.

Like, it can be a fact checker, it can be just a thought extractor.

Like, hey Daniel, you and Alex Imas were talking about this thing and you don't realize it actually relates to this thing you were talking to Ethan Mollick about. And, you know, here's a paper that relates to that. Whatever. I think you could be there. I don't think you need OpenClaw.

Daniel Rock:Yeah. Something on the C side of the oft-forgotten ICT as opposed to just IT. Right. Like the communication and.

Adam Davidson:You mean information communication and technology.

Daniel Rock:Information communication technology. Yeah, exactly. So I think the communications partners. Yeah. And that leads to, as you were saying, Coasean considerations like the size of the firm.

Everyone's like, oh, we can have one-person enormous firms. You can also have much bigger coordinated firms between AI and people.

This is like the Eduard Talamas, Enrique Ide view of the world, where I'd imagine the distribution of firms gets a lot more spread out in terms of how many people work there, the scope.

Adam Davidson:Of what they do, this whole question of even why are there firms at all? Which Coase set out to ask, like, hey, wait a second, we're capitalists.

We believe that markets where price is constantly adjusting to reflect supply and demand, we all agreed that's what we're into. And suddenly the most successful manifestation are these top down, heavily controlled organizations that are completely blunted.

It's not like someone goes to work at Target every morning and they're like, oh, real estate costs in St. Louis have gone up, so your salary has fallen by 12% sense. And oh, good news. We just found out that this input.

Daniel Rock:To our lives in a fallen world.

Adam Davidson:Slightly chi. Yeah, that literature basically concludes.

This is my very cheap way of saying it is corporations are an utterly ridiculous, wasteful thing, except that they're completely necessary. And that doesn't mean that every version of them is necessary or that they don't change. All right?

Getting to, as our grandparents would say, getting to the tachlis, to the details. So we talked about one pretty picture. A pretty picture is agents are facilitating more conversation.

Agents are allowing each of us to spend more of our days doing the things that we're uniquely good at doing and creating manifestations of that out in the world. Just like in my Mad Men example, I can come up with an ad campaign.

But now instead of needing a draftsman to draft it up and a copywriter to write it, I can just generate it. And it's now, you know, and then I can use digital technology to spread it all over the world.

A darker version is we just don't need that many people. There's not that many people who can add that kind of value. Is that the right darker version of the world?

Daniel Rock:No, I don't think so. I'll tell you why most of it comes, if you really go down to it, from the unlimited wants.

Like, we can always make things Better, you know, marginally for people. And maybe the hop from no air conditioning to air conditioning is bigger than the hop from. I no longer draw my ad campaign.

I describe it to an AI model, right? But what I've been thinking about a lot lately is like science.

And I think science and science productivity is maybe informative for how the rest of work would go.

So we have this process right now where if you want to be like a quote unquote serious scientist, you go do a graduate degree, you learn how to contribute to the knowledge base in a very probably arcane and bespoke language for your field. And then it gets locked up in these papers as like an object that says this is what research is. And yeah, that is inaccessible to a lot of people.

So Ben Jones and others have written about the burden of knowledge, where gradually, as we add stuff, to become an expert that can actually operate at the frontier, it's a longer trek. And I imagine, so, to first order, right?

AI, if it facilitates discovering new things, it could blow up how wide that frontier is. There could be lots more places where people are doing research; it could expand it.

So you have to know way, way more in fact, in places where the engineering outstrips what we actually know from a scientific perspective. So you're imagining like I gotta chase really far out there to start contributing to knowledge. And I also have to learn a ton.

So I think we need tools that are sort of what I call buses to the frontier. Instead of the usual manual effort, we have to figure out how to get people trained up in a way that they have expertise that's actually valuable.

And I like that David Autor and Neil Thompson paper about expertise in AI. I think that's a really cool line of inquiry. How do we get people expertise? It may be that we, we have people spend a lot more time in school.

I'm not sure everybody's super excited about that. There are people like me who probably spent, well, definitely spent way too long in school, but...

Adam Davidson:My 14-year-old definitely does not like that idea.

Daniel Rock:So your 14 year old is going to say, what do you mean? I have another 14 years of this? And the answer can't be forever. Like, let's just keep adding education.

When AI can do all this stuff, the answer's got to be like, let's figure out a new way of thinking so that people can rapidly become expert at something and kind of know some unique questions to ask and build on. So I think that's how knowledge work transforms.

Now if you want to take a pessimistic view of that, you might doubt that it's possible, for one. Like, what does that even mean, to change how we train people? I haven't come up with a concrete plan for that by any means.

You might think that it's a smaller proportion of the population, relative to like, say, everybody goes to college right now, that can actually participate in that kind of economy. So it's a lot less equal and we get more of the polarizing skill-biased technical change we've had for, you know, 30, 40 years or...

Adam Davidson:And that could be like, you know, wealth and income inequality, but that can also be like personal. You know, the example that comes to my mind is textile mills. Like for most of the 20th century and 19th century, America's like core a lot.

Well, not most of the 20th century, but into the 20th century.

[...] even early [...] in another machine. By the [...], like, even the most basic introductory job required some ability to use computers, to understand numbers, things that were just beyond him. And he didn't change; the economy just no longer needed that person.

[...] think of a factory in, say, the [...]. But now you really do need to leave the workforce, go get at least an associate's degree in CNC machining or something, and then reenter the workforce. So there's an opportunity that not everybody has, but doesn't have the money. But then there's another issue, that is, not everyone has the proclivity.

Like, there's not a job for every proclivity. You need a certain kind of agency. I remember being on a factory floor and saying, could I get a job here?

And the guy's like, my guess is you're not going to be very good at visualizing multi turn spindles in three dimensional space and figuring out how to translate what needs to be done into code. And I don't want to spend two years training you and find out you suck at it.

So that's a very long winded way of saying, you and I would love a world where we're like able to research more, learn more, explore more. But that might not be what everyone's good at or wants to do.

Daniel Rock:Yeah, so you have to make it easy for folks, I think. Yeah, a lot of people would get excited if they could convert their ideas into real things super quickly and see what happened.

And then a lot of people have. They derive their joy in life from other places and work is not really that important to them as like the, the main thing that they do.

I, I don't think people should be like radically penalized for that just because of the march of technology per se. You know, we, we got to figure out a way to, to make that kind of work or you know, just in general a different set of life choices acceptable.

I worry about folks who would get displaced into that or I worry a little bit about the sense that like, you know, you just can't learn what you need to know for a lot of people to, to help contribute.

So that's kind of, if you think that machine intelligence is like radically cheap, say in three, four years, at least of the kind we have. Intelligence, human intelligences, tend to be complementary to each other. If you get a lot of smart people together, they tend to do better things than what any one person would do.

I would imagine it's going to be a similar story for humans and machines and even machines and machines, but that, that's a recipe for a smaller group kind of agglomerating and running away and not a recipe for sort of shared prosperity.

If like, if you get like an intelligence firm, right, something that looks like Anthropic or OpenAI, what does that mean for the rest of the economy if you're a manufacturing firm or something like that instead?

Adam Davidson:And we have seen this story, right, like with trade, as I understand, like the David Autor literature and others, that global trade and automation eliminated something we now call the manufacturing premium, which was two people with roughly equal skills and education and background.

If one of them goes to a factory until sometime in the 70s or 80s, they were going to make more money than if they went into, say, shoe sales or whatever it was. And as I understand it, roughly speaking with manufacturing, it's not that manufacturing collapsed, it's that manufacturing employment collapsed.

We still make a lot in the US but we just use a lot fewer people. That roughly speaking, two thirds of what used to be the middle class actually improved as a result of trade and technology.

One third fell in income and opportunity. But the one third that fell fell so much further than the two thirds that rose that the average fell.

Daniel Rock:I think on average trade was good, like wealth creating. But even if the gains were sort of unevenly distributed...

Adam Davidson:I think that is the argument that it was. If you look at the economy, it was good. A huge percentage of Americans have no wealth. And so I'm pro trade. I'm not sure.

Yeah, but it's what economists call distributional consequences. So one version of the future is there are just fewer jobs. Another version of the future is there's the same number of jobs, but just some percentage of them pay a lot less than they would otherwise.

Daniel Rock:Yeah. And more will be demanded of us. I think the, the educational system that we have is probably ill equipped to adjust to this quickly enough.

And you know, in the industrial revolution, the way that people were schooled or the requirements for their education were very different. I see some things kind of going the wrong way too with education these days.

But you know, perhaps one thing, this is more the domain of sociologists than anything I would understand well. Like if we can make teachers a little bit, like, higher status, higher compensation, like prioritize what they do for students if human capital becomes that important. It's not that it's not important, it's just that. But I think our system was structured, you know, on an older baseline of what was going on.

Maybe we need to do something to help people get to the point where they're like really, really good at, an expert at, something that they chose when they're 14, and they work on that, right, for a little while, and then maybe they change their tactics five, six years later, and it's possible to get to their frontier in that thing super quickly as well.

Adam Davidson:So though sometimes I think almost the opposite that you know, I look at my 14 year old son and he's really interested in like philosophy and trying to like big ideas. And I'm like that might be good. That might be better than becoming like a really qualified accountant or engineer.

That knowing how to turn vague hunches into expressible ideas in a clear and easily communicated form.

Daniel Rock:I think philosophy majors do pretty well across all liberal arts majors especially. But there's something special about philosophy. The reasoning involved, the clarity of thought.

Adam Davidson:Like you're saying, okay, here's what I'm thinking of a change that I'm noting in you, which might just be the time of day or something, but that you seem more uncertain than you were a year ago to me.

I'm hearing you describe a picture that strikes me as hazier to you than the picture you described a year ago, which is probably a sign that you're a good thinker and academic, that when the facts change, you change your mind, and when the facts are unclear, you're less confident. Does that feel right?

Daniel Rock:I think that's probably right, yeah. I think the more you learn in any area, the less confident you are about many things. I have learned quite a bit in the last year. I think we all have.

And I'm kind of like, oh, wow, there's many, many questions out there that still need answering. And I know less than I thought I did. Which, it might sound negative to some people, but I'm actually thrilled about that. Like, cool.

Adam Davidson:Yeah. But that. That might be the test. By the way, if uncertainty feels exciting.

Daniel Rock:Yeah, I'm definitely excited.

Adam Davidson:Then you're probably going to do relatively better in the future. But if uncertainty feels terrifying, that might be a bad...

Daniel Rock:Yeah, sure. I mean, uncertainty in the form of, like, you know, will something blow my house up tomorrow or not is. I think it's. It's. I have.

I have a very comfortable form of uncertainty here, where it's like, what cool question do I get to talk about with my buddy Adam, you know, in next year's podcast?

Adam Davidson:Right. And what are all the things? Right, because we're talking, like, agents. Like, we're done. Like, we've figured out what AI is. It's a bunch of agents.

But, you know, a year ago, we would have said MCPs are going to be the big thing, and now MCPs are fine, but they're not, like, the hot red center of AI. And we also don't fully know what an agent is.

And it's not clear when I use the word and you use the word that we're describing the same thing and we don't know the. You know, I sometimes ask myself, like, is it even going to be meaningful, the number of agents I have? Or are agents just going to be.

Daniel Rock:Spun up and you won't even know they're there?

Adam Davidson:Like, am I? Can everyone even know they're there?

Daniel Rock:You're just going to hear a chorus of "you're absolutely right," like, running in your head from the little bead they stick behind your ear as the AI builds into it.

Adam Davidson:The other thing I'm noting is when I ask you to help us think through what the future holds, the actual technology is a relatively small part of the equation. It's key, obviously. And if we are entering the hypergrowth stage with recursively self-improving LLMs, that will have impact.

But really what you're talking about, even agents are not, I wouldn't call those a technology in the sense of "Opus 4.6 is this much better than Opus 4.5." Rather, it's a, it's a mental framework.

It's an idea of how to use.

Daniel Rock:Like a form factor.

Adam Davidson:Yeah, a form factor. And, and we, we definitely don't know a lot of the form factors that are coming.

Daniel Rock:Which you guys were saying too, by the way.

Like, you know, Ethan, you, Lilach, you're like, there's no way that the chat interface is going to be the right form factor, or like the command line will, long run, be the right form. Like something will change.

Adam Davidson:Something will change and is changing and maybe something will just continue.

You know, the day someone told you about TCP/IP, you weren't like, oh, I bet my kids are going to be doing something called Snapchat where they're taking pictures of them.

Daniel Rock:Yeah. And my sending it to my pigeon messenger Internet service at that point, I was like, well, that just, TCP/IP, so much better.

Adam Davidson:Yeah. The other thing, and this may be the most important, is a lot of the implications are going to come down to, it sounds to me like, human decisions and kind of human proclivities. Like, I tend to nudge towards optimism about the impact of AI on work. But that might just be because I can tell a good story about myself.

I don't know that I can tell a good story about every single person, but a quote that did kind of punch me in the chest was someone saying, AI won't steal your job. A C-suite that thinks AI will steal your job will destroy your job.

Daniel Rock:Yeah.

Adam Davidson:So the way we organize work, the way we kind of organize ourselves, the way we respond politically or socially to this new tool, like this is a book being written. We're living through history and we are participants. We're not just observers.

There's not like some secret thing in a data center somewhere that's going to tell us the future of work. The future of work. We've seen the future of work and.

Daniel Rock:It is, yes, that's my joke that usually falls flat is the future of work is more work, but the type of work, the choices we make. There's tons of bottlenecks everywhere too. So I go slowly.

I think one closing thought I might have on this is like there's this really nice entrepreneurial strategy framework. This is another Joshua Gans thing. But not just him. It's Erin Scott and Scott Stern, too.

So they have this idea like, do you fit inside a value chain? Do you build your own brand new value chain? There's other choices there. You might think of a risk with AI.

Adam Davidson:Erin Scott.

Daniel Rock:What's that?

Adam Davidson:Did you say Kristen Scott? Did you say Kristen Scott? Erin Scott.

Daniel Rock:Erin Scott. Yeah. Scott Stern, Erin Scott and Joshua Gans.

Adam Davidson:You know, in my book, I have, like, a whole chapter on their thinking. I'm the leading Scott Stern expert in America.

Daniel Rock:We've discussed it. And Scott Stern is a wonderful person.

Adam Davidson:Yeah. I know more about him than he does. Yeah.

Daniel Rock:So there's this, like, you know, the entrepreneurial strategy compass thing they have. It's like, you know, you've got value chain strategies where you sit inside someone's value chain or you disrupt it.

And, like, if you're disrupting it, it's got to be really quickly executed. It's like, take a hill. Or if you're collaborating with folks, you might sell IP instead of being in their value chain.

And then there's kind of like, I've got brand new IP and I'm going to completely disrupt everything. And that's like their. I think they call it the architectural strategy.

You might worry with AI, if it moves too quickly, that the people building it and the choices that are made are no longer, let's fix the existing economy in some way, but let's rather, let's build an entirely separate external economy that maybe trades with the one that exists, and that could get dystopian kind of quickly.

Adam Davidson:I mean, that's something I've hinted to some of our members about, and I'd love to explore more: I feel like every big company should have a little red team that's figuring out how to destroy that company using AI and then extracting lessons from that and not starting the...

Daniel Rock:For sure. They're like, you got to pay those people in a lot of equity.

Adam Davidson:Yeah, yeah. Right. I mean, that's like. Yeah, right. Like every. Right. Every small nation should start building nuclear weapons. Maybe I should shut up.

All right, Daniel, thank you for that exciting but frankly, fuzzy picture. Although in the fuzziness. Fuzziness is better than, oh, we're all screwed.

Daniel Rock:So it's fuzzy. Yeah. I think tomorrow will be sort of similar to today, and then, you know, six months from now might be kind of different.

Adam Davidson:Yeah. And six years from now will be very different. And in ways I hope we find exciting.

Daniel Rock:Definitely. Well, thanks, Adam. Always great talking with you, man.

Adam Davidson:Daniel and I could obviously talk for hours, and I hope we do. You can find him on the Discord. He's an active member and the kind of person who will actually engage with you there.

If you have questions, reactions, things from your own organization you want to pressure test against his framework, that's the place. As always, I'm Adam Davidson, and this is the FeedForward podcast.